Introduction: A Watershed Moment for Legal Tech Ethics

The Supreme Court of India has delivered a stern warning to the legal community: judgments generated by artificial intelligence cannot be trusted without verification.

In a landmark ruling on February 27, 2026, the Court held that citing AI-generated fake judgments constitutes professional misconduct, not mere negligence [^1]. The ruling marks a turning point for AI in the Indian legal system.

Artificial intelligence tools like ChatGPT have proliferated across Indian courtrooms since late 2022. Many lawyers now use these tools for research, drafting, and case analysis. However, the recent controversy over AI-generated judgments before the Supreme Court reveals a dangerous trend. Several advocates have submitted fabricated case citations generated by AI tools [^2]. These tools hallucinate non-existent precedents with alarming frequency.

The line between technological assistance and professional misconduct has therefore been decisively drawn, and Indian lawyers must understand the implications of this ruling immediately. The Court’s zero-tolerance approach could result in severe penalties, including potential debarment.

The Supreme Court’s Ruling: Defining Misconduct

The Bench’s Declaration

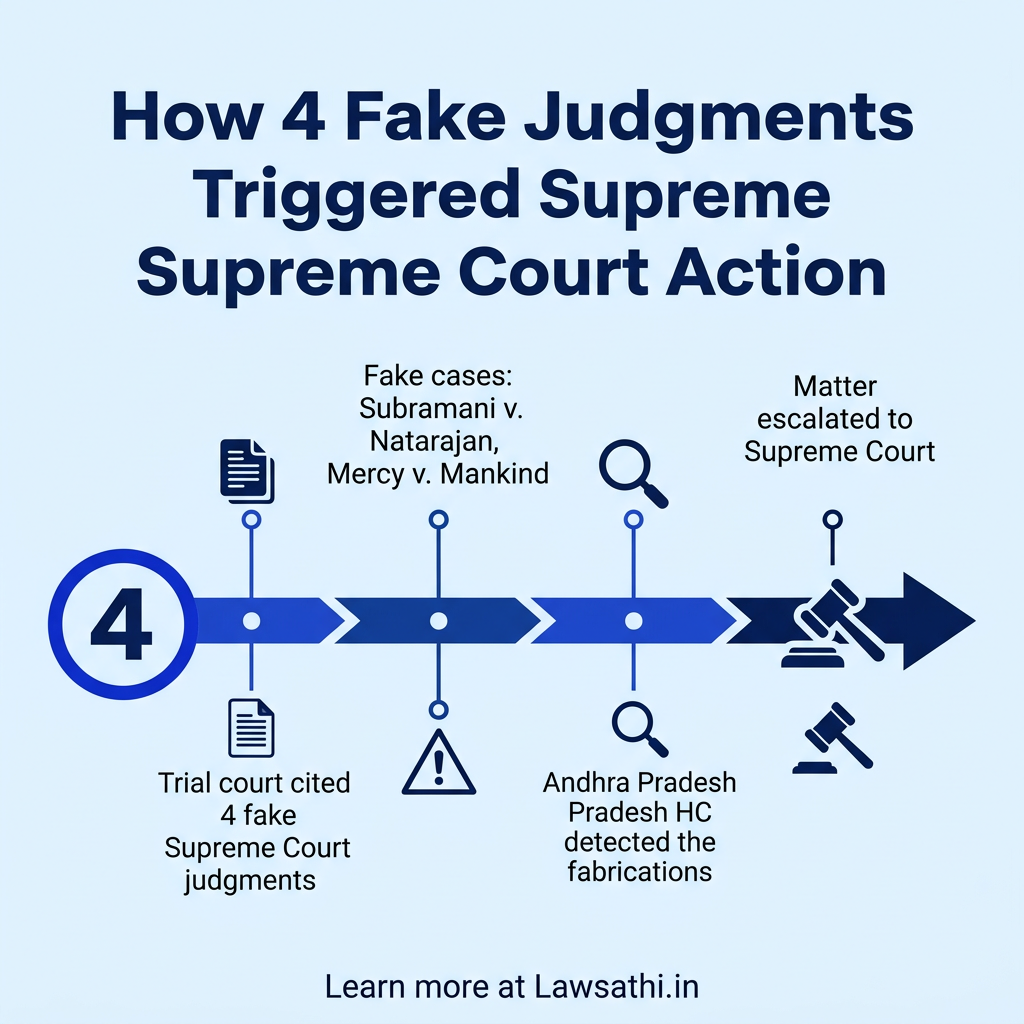

A Bench of Justice PS Narasimha and Justice Alok Aradhe delivered the landmark order. The case, Gummadi Usha Rani & Anr. v. Sure Mallikarjuna Rao & Anr., exposed a serious problem [^3]. A trial court had relied on four non-existent judgments. The Supreme Court’s response was unequivocal and firm.

> “At the outset, we must declare that a decision based on such non-existent and fake alleged judgments is not an error in the decision making. It would be a misconduct and legal consequence shall follow.” [^1]

What Constitutes Misconduct Under the Ruling

The Court explicitly classified several actions as misconduct. First, citing AI-generated fake judgments qualifies as misconduct, not mere error. Second, using AI to draft submissions without verification constitutes professional negligence. Third, attributing fabricated quotes to real judgments amounts to deception of the court [^5].

Furthermore, the Court emphasized that this issue assumes “considerable institutional concern.” The integrity of the adjudicatory process depends on accurate legal citations. When lawyers submit fabricated precedents, the entire justice system suffers. Consequently, public trust in the judiciary erodes.

Parties Notified and Consequences Indicated

The Supreme Court issued notices to critical stakeholders. These include the Attorney General for India and the Solicitor General of India [^1]. The Bar Council of India also received notice. Senior Advocate Shyam Divan was appointed as amicus curiae to assist the Court.

Additionally, the Bench indicated it would examine “consequences and accountability.” This suggests potential disciplinary action against lawyers who submit fake citations. The message is clear and unambiguous. Legal consequences will follow for such misconduct.

The Case That Triggered the Warning

Background of the Property Dispute

The triggering case originated from a property dispute in Andhra Pradesh. A trial court had appointed an Advocate Commissioner to inspect a disputed property. The defendants objected to the Commissioner’s report, seeking its rejection [^3].

However, the trial court dismissed these objections on August 19, 2025. Crucially, the court relied on four Supreme Court judgments to support its decision. These citations appeared authoritative and legitimate on the surface.

The Four Fabricated Citations

Upon investigation, all four citations proved to be complete fabrications. The fake judgments included Subramani v. M. Natarajan (2013) 14 SCC 95 [^3]. Additionally, Chidambaram Pillai v. SAL Ramasamy (1971) 2 SCC 68 was cited. Lakshmi Devi v. K. Prabha (2006) 5 SCC 551 also appeared in the order. Furthermore, Gajanan v. Ramdas (2015) 6 SCC 223 rounded out the fake citations [^3]. None of these cases exist in any official legal database.

Detection of the AI Hallucinations

The Andhra Pradesh High Court first detected the problem. Petitioners contended that the citations were “non-existent and fake orders.” The High Court verified this claim promptly [^3]. It confirmed the judgments were AI-generated hallucinations. However, the High Court still dismissed the petition on the merits, recording a word of caution while doing so.

Subsequently, the matter reached the Supreme Court. The apex court recognized the broader institutional crisis at hand. Justice Surya Kant had already flagged an “alarming” trend in February 2026. In one instance, a petition referenced “Mercy v. Mankind” [^4]. This case never existed at all. In Justice Dipankar Datta’s court, multiple fabricated judgments were cited [^29]. All proved to be complete inventions.

Why AI Hallucinations Are Dangerous for Justice

Understanding LLM Hallucinations

Large Language Models like ChatGPT generate outputs that appear authoritative but may be fabricated. These AI systems are trained to predict plausible next words, not to verify facts [^7]. Consequently, they cannot distinguish between real and imagined case citations.

This phenomenon, known as “hallucination,” poses unique risks in legal contexts. The AI produces outputs with unwavering confidence. A lawyer unfamiliar with relevant case law might mistake these fabrications for genuine precedents. Therefore, the risk of error remains high.

Risks to Client Liberty and Financial Stakes

The consequences of fake citations extend far beyond professional embarrassment. In criminal cases, fabricated precedents could affect bail decisions and sentencing outcomes. A client’s liberty might hinge on citations that do not exist [^7].

Similarly, civil disputes involving property rights face serious risks. Financial stakes in commercial disputes worth crores could be influenced by false case law. Contract enforcement and tax assessments may rely on precedents that never existed.

Erosion of Public Trust in the Judiciary

Justice BR Gavai articulated the deeper concern eloquently. He asked whether a machine “lacking human emotions and moral reasoning” can grasp legal complexities [^11]. Justice demands empathy, ethics, and contextual understanding. These qualities remain beyond the reach of algorithms.

Moreover, Justice Nagarathna highlighted an additional burden on judges. Even when citations are real, fake quotes are often attributed to judgments [^4]. Judges must now verify every citation meticulously. This undermines the efficiency gains AI was meant to provide.

The Fine Line: AI as a Tool vs. AI as an Authority

Permissible Uses of AI in Legal Practice

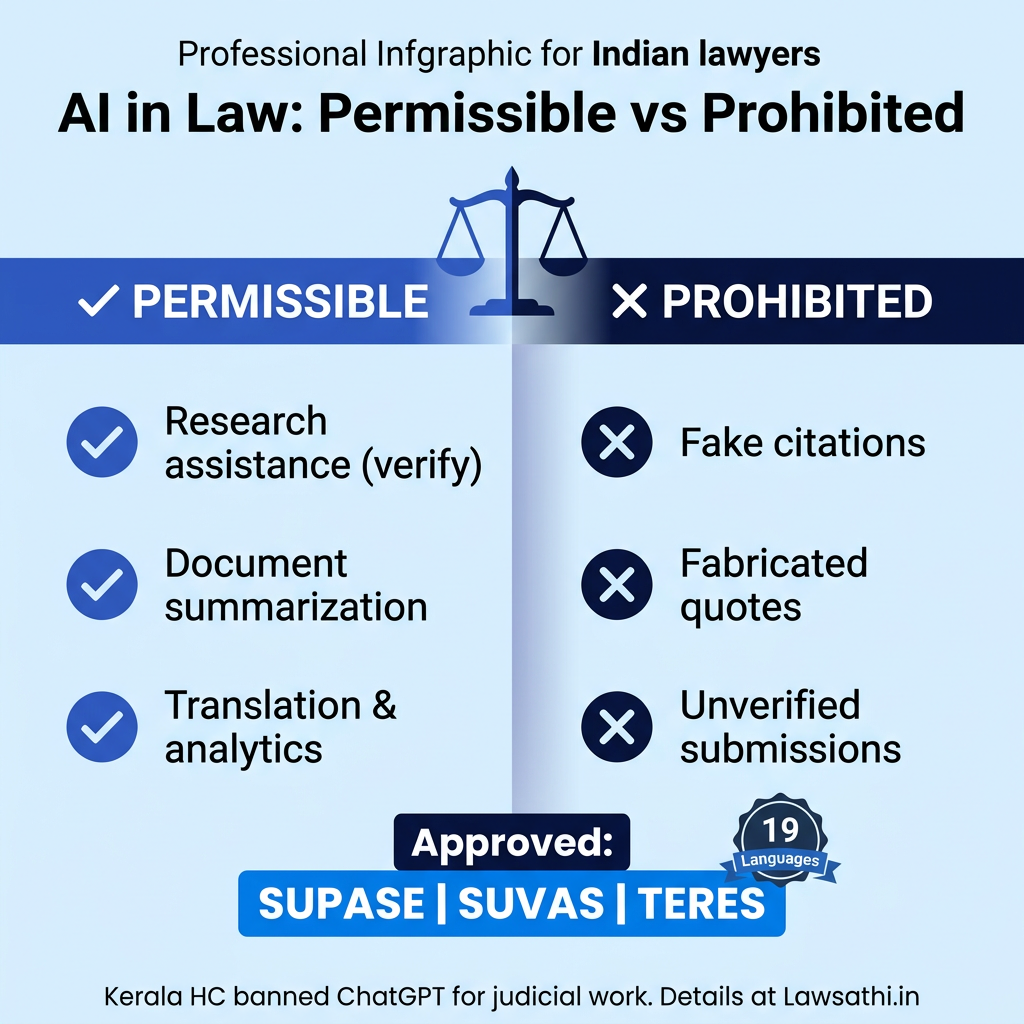

The Supreme Court has not prohibited AI usage entirely. Instead, the Court has drawn a clear distinction between permissible and prohibited uses. AI can serve as a tool for assistance when properly supervised.

Permissible activities include legal research assistance with mandatory verification. Summarization of lengthy documents remains acceptable with human review. Drafting skeleton arguments is allowed when lawyers validate every statement [^12]. Translation services and docket analytics also fall within acceptable parameters.

Supreme Court’s Approved Indigenous AI Tools

The Supreme Court itself has developed and approved certain AI tools. SUPACE assists with case record analysis. SUVAS provides translation into 19 regional languages. TERES offers real-time transcription services [^14]. These tools demonstrate that AI integration can enhance judicial efficiency. However, proper design and oversight remain essential.

Prohibited Uses Under New Standards

In contrast, generating case citations without verification is strictly prohibited. Drafting submissions with fabricated quotes constitutes professional negligence [^12]. Submitting AI output as final work product invites potential debarment. Using cloud-based AI for privileged information breaches confidentiality obligations.

The Supreme Court released a White Paper in November 2025. This document established key principles for AI use. These include mandatory human verification and confidentiality safeguards [^14]. Disclosure requirements were also mandated.

Kerala High Court’s Pioneer Policy

In July 2025, the Kerala High Court became the first in India to issue comprehensive AI guidelines. The policy explicitly states that AI tools shall not be used to arrive at judgments [^32]. Only AI tools approved by the High Court or Supreme Court are permitted. All legal citations must be meticulously verified by judicial officers. Courts must maintain detailed audits of all AI tool usage.

Furthermore, the policy prohibits cloud services like ChatGPT for judicial work [^33]. DeepSeek and similar tools are also banned. Violations invite disciplinary proceedings. This framework offers a template for other High Courts to follow.

Practical Guidelines for Indian Law Firms

Mandatory Verification Protocols

Every law firm must implement rigorous verification protocols. Cross-checking with official sources is non-negotiable. Lawyers should verify citations through the Supreme Court Reports at https://scr.sci.gov.in [^16]. The Digital SCR at https://digiscr.sci.gov.in provides authenticated digital copies [^17]. Indian Kanoon at https://indiankanoon.org offers searchable access to case law [^18].

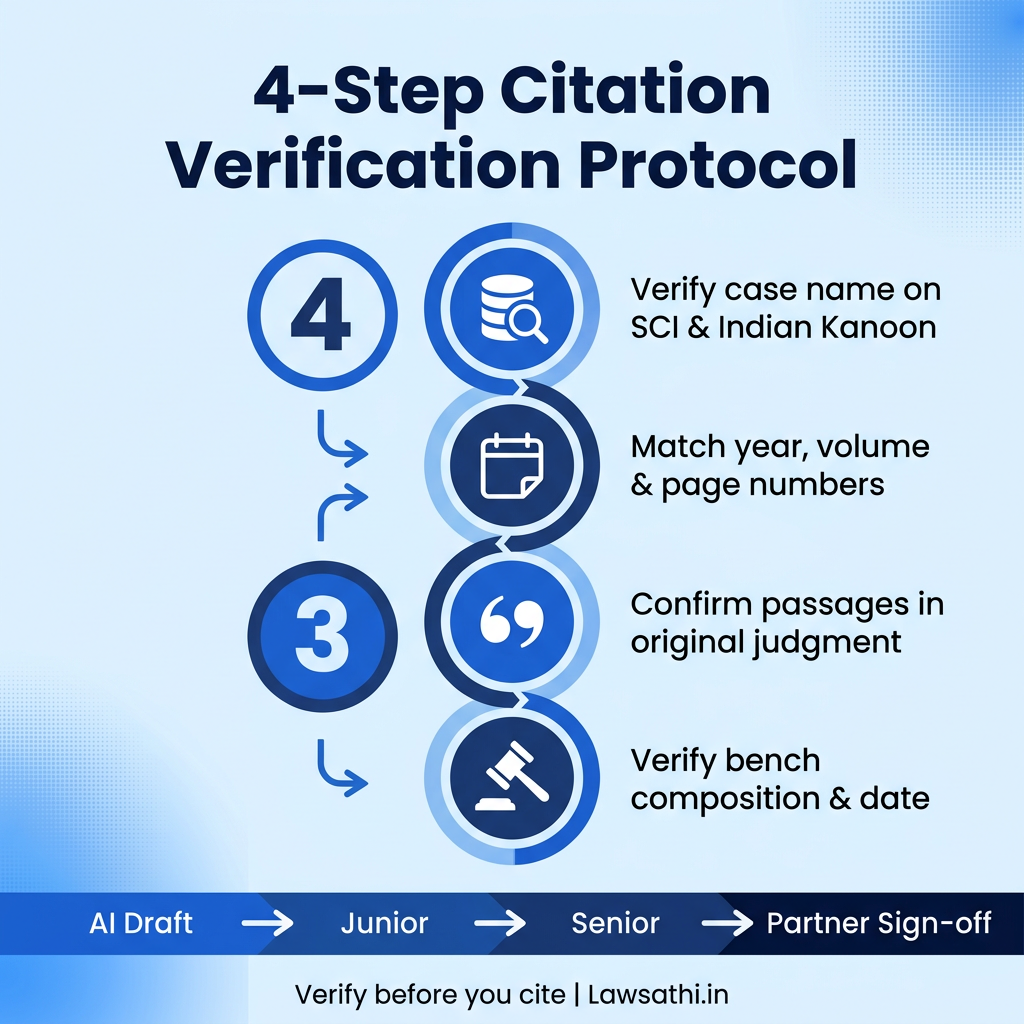

Step-by-Step Citation Verification

First, verify that the case name exists in an official database. Second, check that the year, volume, and page numbers match the official record. Third, confirm that quoted passages appear in the original judgment. Fourth, verify the bench composition and judgment date [^19]. Most importantly, never rely solely on AI-generated summaries or non-official legal blogs.
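The first three checks above can be sketched in code. The following is a minimal illustration, not an official tool: `Citation`, `parse_scc`, and `find_problems` are hypothetical names, the pattern handles only the SCC reporter style quoted in this article, and the "official" record and judgment text are assumed to have been retrieved by a person from an official source. Bench composition and judgment date would still need to be checked by hand.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Matches the SCC style used in this article, e.g.
# "Subramani v. M. Natarajan (2013) 14 SCC 95". Other reporters are not handled.
SCC_PATTERN = re.compile(
    r"^(?P<name>.+?)\s*\((?P<year>\d{4})\)\s*(?P<volume>\d+)\s+SCC\s+(?P<page>\d+)$"
)


@dataclass(frozen=True)
class Citation:
    name: str
    year: int
    volume: int
    page: int


def parse_scc(text: str) -> Optional[Citation]:
    """Split a citation string into its parts; return None if it is malformed."""
    m = SCC_PATTERN.match(text.strip())
    if m is None:
        return None
    return Citation(m["name"].strip(), int(m["year"]), int(m["volume"]), int(m["page"]))


def find_problems(
    claimed: Citation,
    official: Optional[Citation],  # looked up by a human; None if not found
    quote: str = "",
    official_text: str = "",
) -> List[str]:
    """Compare a claimed citation with the record found in an official source."""
    if official is None:
        return ["case not found in official source"]
    problems = []
    if claimed.name.casefold() != official.name.casefold():
        problems.append("case name differs")
    for field_name in ("year", "volume", "page"):
        if getattr(claimed, field_name) != getattr(official, field_name):
            problems.append(f"{field_name} differs")
    # Normalise whitespace before checking that the quoted passage really appears.
    if quote and " ".join(quote.split()) not in " ".join(official_text.split()):
        problems.append("quoted passage not found in judgment text")
    return problems
```

An empty list from `find_problems` means only that these mechanical checks passed. It does not replace reading the judgment itself.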

Human-in-the-Loop Workflows

Law firms should implement mandatory “Human-in-the-Loop” workflows. The recommended process follows a clear chain of accountability. AI drafts should first be reviewed by junior associates. They must check all citations thoroughly. Senior associates then verify legal propositions and arguments. Partners provide final approval and sign-off before filing [^21].

This multi-layered approach ensures accountability at every stage. No AI-generated content reaches the court without human verification.
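One way a firm might enforce this chain in its document system is a simple sign-off gate. The sketch below is an assumption-laden illustration: the `Draft` class, the role names, and `REVIEW_CHAIN` are hypothetical and would need to be adapted to a firm's actual structure.

```python
from dataclasses import dataclass, field
from typing import List

# Review order described above; role names are illustrative assumptions.
REVIEW_CHAIN = ("junior_associate", "senior_associate", "partner")


@dataclass
class Draft:
    title: str
    signoffs: List[str] = field(default_factory=list)

    def sign_off(self, role: str) -> None:
        """Record a sign-off, rejecting any that arrives out of order."""
        next_index = len(self.signoffs)
        if next_index >= len(REVIEW_CHAIN):
            raise ValueError("draft is already fully approved")
        expected = REVIEW_CHAIN[next_index]
        if role != expected:
            raise ValueError(f"expected sign-off from {expected}, got {role}")
        self.signoffs.append(role)

    def ready_to_file(self) -> bool:
        """True only when every reviewer in the chain has signed off."""
        return tuple(self.signoffs) == REVIEW_CHAIN
```

Because `sign_off` rejects out-of-order approvals, a partner cannot approve a draft whose citations have not yet been checked at the earlier stages.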

Training Junior Associates on Ethical AI Use

Junior associates require specific training on AI risks and verification methods. Essential components include a thorough understanding of AI hallucination risks and proficiency with official legal databases [^22]. Verification methodology should become second nature. Professional ethics in the AI age must be emphasized repeatedly, and client confidentiality when using AI tools requires careful attention.

Conclusion: Upholding Integrity in the Digital Age

The Supreme Court’s Zero-Tolerance Message

The Supreme Court’s ruling on AI-generated judgments establishes an unambiguous standard. Using AI-generated fake judgments constitutes professional misconduct, not mere negligence. Courts will examine consequences and accountability rigorously. The Bar Council of India is expected to issue formal guidelines soon [^11].

Regulatory Developments Ahead

Currently, the Advocates Act of 1961 and BCI Rules remain silent on AI usage. India lacks a pan-India framework for lawyers, unlike the USA and UK [^12]. However, the Artificial Intelligence (Ethics and Accountability) Bill, 2025 has been introduced in Parliament. The BCI is actively considering AI guidelines for the legal profession.

The Path Forward for Indian Lawyers

The Supreme Court’s message is unequivocal. AI serves as a tool for assistance, not an authority to be blindly trusted. The integrity of India’s judicial system depends on lawyers who verify their citations. Judges must scrutinize citations carefully. The Bar Council must regulate emerging technologies responsibly.

Technology should aid judgment, not replace it. Indian lawyers who embrace this principle will navigate the digital age successfully. Those who shortcut verification processes risk their careers and their clients’ interests.
